After all, Google has been saying this for years: back in 2015, John Mueller said “you probably shouldn't use the noindex in the robots.txt file”. In fact, Google released its robots.txt parser as an open source project along with this announcement the other day.

The first proposed changes came earlier this week:

- The "Requirements Language" section will be removed.
- Robots.txt now accepts all URI-based protocols.
- Google follows at least five redirect hops, and if no robots.txt is found, Google treats it as a 404 for the robots.txt.
- If the robots.txt is unreachable for more than 30 days (5XX status code), the last cached copy of the robots.txt is used; if no cached copy is available, Google assumes there are no crawl restrictions.
- Google treats unsuccessful requests or incomplete data as a server error.
- "Records" are now called "lines" or "rules".
- Google doesn't support the handling of fields with simple errors or typos (for example, "useragent" instead of "user-agent").
- Google currently enforces a size limit of 500 kibibytes (KiB) and ignores content after that limit.
- Updated formal syntax to be valid Augmented Backus-Naur Form (ABNF) per RFC5234 and to cover for UTF-8 characters in the robots.txt.
- Removed references to the deprecated Ajax Crawling Scheme.
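To make the fetch-handling rules from the proposed changes more concrete, here is a minimal Python sketch of how a crawler might decide which robots.txt content to act on. The function name `effective_robots_body` and its parameters are hypothetical. Two behaviours are assumptions rather than statements from the list: that a 404-style result means "no restrictions", and that a server error lasting less than 30 days means "hold off crawling".

```python
from typing import Optional

MAX_ROBOTS_BYTES = 500 * 1024  # 500 KiB size limit from the proposed changes


def effective_robots_body(status: int,
                          body: bytes = b"",
                          days_unreachable: int = 0,
                          cached: Optional[bytes] = None) -> Optional[bytes]:
    """Return the robots.txt bytes to act on, or None for 'do not crawl yet'.

    An empty bytes object stands for 'no crawl restrictions'.
    """
    if 200 <= status < 300:
        # Content past the size limit is ignored.
        return body[:MAX_ROBOTS_BYTES]

    if 500 <= status < 600:
        if days_unreachable > 30:
            # Fall back to the last cached copy, else assume no restrictions.
            return cached if cached is not None else b""
        # Assumption: while the outage is recent, hold off crawling.
        return None

    # A redirect chain that never reaches a robots.txt, and 4xx responses,
    # are handled like a 404. Assumption: a 404 means no restrictions.
    return b""
```

For example, `effective_robots_body(503, days_unreachable=31)` returns `b""` (no restrictions), while the same call with `days_unreachable=5` returns `None`.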

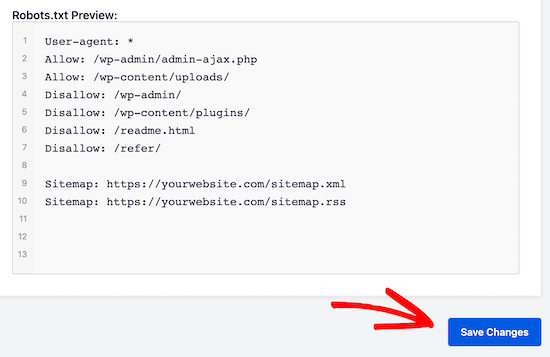

As the Robots Exclusion Protocol has never been official, there were no definitive guidelines on how to keep it up to date or to make sure a specific syntax was followed. Every major search engine has adopted robots.txt as a crawling directive, and from now on it will be standardised.

Noindex (robots.txt) + disallow was the way webmasters could prevent both the crawling and the indexing of certain content. With the new update, SEOs will only be able to disallow content that they don't want to be crawled and indexed before it goes live. For content that's been published for a while, there are a number of alternative options:

- Noindex in robots meta tags: Supported both in the HTTP response headers and in HTML, the noindex directive is the most effective way to remove URLs from the index when crawling is allowed.
- 404 and 410 HTTP status codes: Both status codes mean that the page doesn't exist, which will drop the URLs from Google's index once they're crawled and processed.
- Password protection: Unless markup is used to indicate subscription or paywalled content, hiding a page behind a login will generally remove it from Google's index.
- Disallow in robots.txt: Search engines can only index pages that they know exist, so blocking the page from being crawled usually means its content won't be indexed.
- Search Console Remove URL tool: The tool is a quick and easy method to remove a URL temporarily from Google's search results.
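As an illustration of the first alternative, the following Python sketch checks whether a page carries a noindex directive in either place it is supported: the `X-Robots-Tag` HTTP response header or a robots meta tag in the HTML. The helper names `has_noindex` and `_RobotsMetaParser` are made up for this example, and the sketch is simplified: it ignores crawler-specific variants such as a `googlebot` meta tag or a user-agent prefix in the header.

```python
from html.parser import HTMLParser


class _RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def has_noindex(headers: dict, html: str) -> bool:
    """True if the response header or the HTML asks not to be indexed."""
    header_directives = set()
    for name, value in headers.items():
        # Header names are case-insensitive.
        if name.lower() == "x-robots-tag":
            header_directives.update(d.strip().lower() for d in value.split(","))

    parser = _RobotsMetaParser()
    parser.feed(html)
    return "noindex" in header_directives or "noindex" in parser.directives
```

Calling `has_noindex({"X-Robots-Tag": "noindex, nofollow"}, "")` returns `True`, as does passing an empty header dict with HTML that contains `<meta name="robots" content="noindex">`.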

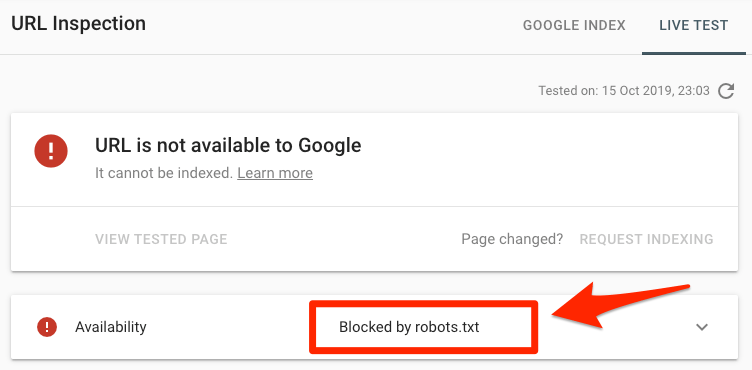

- Disallow: tells search engines not to crawl your page(s).
- Nofollow: tells search engines not to follow the links on your page.

Noindex (HTML tag on the page) + disallow can't be combined: because the page is blocked by the disallow, search engines won't crawl it and so never discover the tag advising them not to index it.
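The conflict between an on-page noindex and a disallow rule can be checked mechanically. This short Python sketch uses the standard library's `urllib.robotparser` to test whether a crawler is allowed to fetch a URL at all; if it is not, any noindex tag on that page goes unseen. The function name `noindex_is_visible`, the sample rules, and the example.com URLs are illustrative assumptions, and the standard library parser is not guaranteed to match Google's own parsing in every edge case.

```python
from urllib.robotparser import RobotFileParser


def noindex_is_visible(robots_txt: str, url: str, user_agent: str = "*") -> bool:
    """True if the crawler may fetch the URL and could therefore read a noindex tag."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks one directory.
SAMPLE_ROBOTS = "User-agent: *\nDisallow: /private/\n"

blocked = noindex_is_visible(SAMPLE_ROBOTS, "https://example.com/private/page.html")
open_page = noindex_is_visible(SAMPLE_ROBOTS, "https://example.com/blog/post.html")
```

Here `blocked` is `False`, so a noindex tag on that page would never be read, while `open_page` is `True`. To get a crawlable page out of the index, the disallow rule has to go so that the noindex can be seen.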
